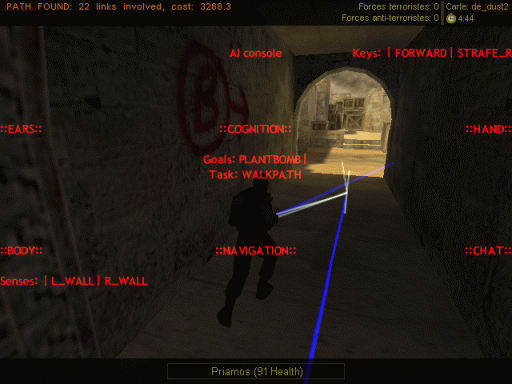

Another development screenie, this one pretty much speaks for itself...

As you can see, I'm still in the WalkPath / cognition system stuff. The WalkPath function is getting better, but really slowly: it's a really complicated one, and honestly, since I don't have much time to spend on it each day, I prefer coding miscellaneous fun stuff, such as the AI console you see here.

This one lets me display each part of the bot AI in real time for the bot I am spectating. The left column is the sensing part (eyes, ears, body), the right one is the moving part (legs, hand, chat), and the middle ones are the other ones I didn't know where to put.
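To make the layout a bit more concrete, here's a rough sketch of how the parts could be sorted into console columns. The `Column` enum and `column_for` function are made up for illustration and are not taken from the actual bot code; anything that is neither sensing nor moving just falls into the middle.

```cpp
#include <string>

// Hypothetical names, for illustration only.
enum class Column { Left, Middle, Right };

// Decide which console column a given part of the bot AI goes to.
Column column_for(const std::string& part) {
    // Sensing parts go on the left.
    if (part == "eyes" || part == "ears" || part == "body")
        return Column::Left;
    // Moving parts go on the right.
    if (part == "legs" || part == "hand" || part == "chat")
        return Column::Right;
    // Everything else lands in the middle.
    return Column::Middle;
}
```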

You can see how the cognition stuff will work BTW: the goal(s) and the task. Each bot can have one or several goals, and in order to get closer to one of them it picks a task. Here the bot thinks "I must plant that damn bomb" and knows "I'm walking towards the freaky bomb spot (what for? to plant that damn bomb)".
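Here's a minimal sketch of that goal/task idea. Everything in it is an assumption made for illustration: the `Goal`, `Task` and `BotMind` types, the numeric priority, and the hard-coded goal-to-task mapping are not how the real bot does it, just one simple way it could be laid out.

```cpp
#include <string>
#include <vector>

// Hypothetical types: none of these names come from the actual bot code.
struct Goal {
    std::string description;  // e.g. "plant the bomb"
    int priority;             // assumed: higher means more urgent
};

struct Task {
    std::string description;  // e.g. "walk to the bomb spot"
    std::string purpose;      // the goal this task serves
};

struct BotMind {
    std::vector<Goal> goals;  // one or several goals
    Task task;                // the single task currently being carried out
};

// Pick the most urgent goal; returns nullptr when the bot has none.
const Goal* pick_goal(const BotMind& mind) {
    const Goal* best = nullptr;
    for (const Goal& g : mind.goals)
        if (best == nullptr || g.priority > best->priority)
            best = &g;
    return best;
}

// Choose a task that gets the bot closer to the given goal.
// The mapping is hard-coded here purely for illustration.
Task pick_task(const Goal& goal) {
    if (goal.description == "plant the bomb")
        return {"walk to the bomb spot", goal.description};
    return {"idle", goal.description};
}

// One line of console text: what the bot is doing, and what for.
std::string describe(const Task& t) {
    return t.description + " (what for? to " + t.purpose + ")";
}
```

The point of keeping the purpose inside the task is that the console can always show both halves: what the bot is doing right now and which goal it is doing it for.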

Phew, it's exhausting.